Welcome to the Halftime Show.

It's spring in Shenzhen, which means peak humidity. It's already 30 degrees, and we're sitting at an ABSURD 85%. As someone raised on the temperate coast of Canada, I miss dry air more than I can explain.

Aside from that, Shenzhen is buzzing as always…

I moved here because I believe the most important technology story in the world is being built in this city, largely without international observers in the room. Last Tuesday, something went live here that made me feel that more acutely than I have in weeks.

Let's get into it.

PLAY OF THE WEEK

Huawei unveils SuperPoD in… Barcelona?

via Bloomberg

In Issue 12, we covered the four-layer framework in China's FYP, 模芯云用: model, chip, cloud, application.

Washington's export controls target layer two, aka the chip, via Nvidia. We all know Nvidia controls roughly 90% of the global AI chip market, powering nearly EVERY major frontier model in the world.

So no chips = no training runs = no frontier models.

It was the most precise lever available. And for a moment, it worked.

This past week, Huawei unveiled SuperPoD in Barcelona. According to a journalist at Bloomberg: “occupying the bulk of Hall 1- Huawei overshadowed every other telecom exhibitor with its sheer size and presence.”

The SuperPoD is not a chip, but a complete AI infrastructure system.

SCOUT REPORT

→ Huawei debuted the Atlas 950 SuperPoD globally at MWC Barcelona. A SuperPoD is a data center in a box: thousands of AI chips wired so tightly they behave as a single computer. The Atlas 950 connects up to 8,192 chips this way. (SCMP)

→ Shenzhen activated China's first large-scale AI cluster on 100% domestic chips on March 31. It delivers 11,000 petaflops, the equivalent of 5.5 million personal computers running simultaneously. Combined with Phase 1, Shenzhen now runs 14,000 petaflops of domestic AI compute. 92% was booked before launch. (SCMP)

→ ByteDance committed $5.6 billion in Huawei chip orders for 2026. ByteDance (parent of TikTok, 1.5 billion monthly active users) is building its entire AI infrastructure on domestic silicon. For context: Nvidia's total China revenue in 2024 was roughly $3-4 billion. (Reuters)

→ DeepSeek V4 confirmed to run on Huawei Ascend chips. DeepSeek's previous models ran on Nvidia. V4 will not. First frontier AI model built to run on China's domestic silicon stack. (Reuters)

→ Huawei published its first-ever public three-year chip roadmap. New chip generations in 2026, 2027, and 2028, each doubling compute power and memory. Huawei has never done this before. (Tom's Hardware)

FILM ROOM

How to turn your constraint into a competitive advantage

The monopoly

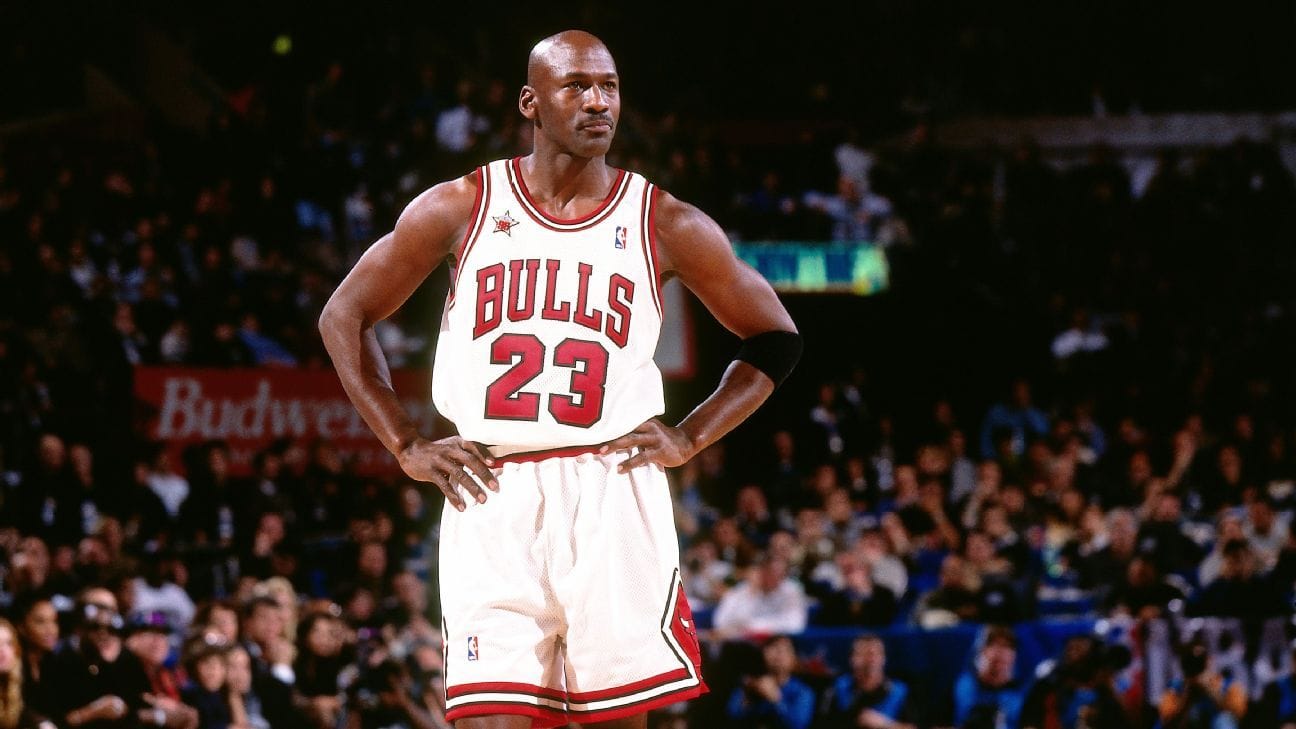

Nvidia owns two things: the best chips in the world, and the software ecosystem built around them. This is Jordan's Bulls- except imagine the Bulls also wrote the rulebook for the entire NBA…

The chips- H100, H200, Blackwell- are all export controlled out of China. The most powerful chip still legally available there is the H20, a deliberately downgraded export version.

The software is CUDA. Every AI lab in the world builds on it. Switching to a different chip means rewriting millions of lines of code. That switching cost is what kept Nvidia's position intact even after the hardware was cut off.

SuperPoD: The different game

Huawei's answer is architectural rather than incremental.

The Atlas 950 SuperPoD connects 8,192 Ascend chips via UnifiedBus, a high-speed internal network that, by Huawei's own claim, moves data between chips 62x faster than Nvidia's equivalent.

The result: thousands of chips behave as a single logical computer rather than individual machines, compensating for what each individual chip lacks. Think of it as a data center in a box.

The individual Ascend chip still trails Nvidia's best. The Ascend 910C delivers roughly 60% of H100 inference performance. The training gap is wider.
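To put rough numbers on that trade-off, here's a back-of-envelope sketch. It uses only the two figures above (8,192 chips per pod, roughly 60% of H100 inference performance per chip) and assumes, purely for illustration, that performance adds up linearly. The `scaling_efficiency` knob is hypothetical, not a Huawei figure. Real clusters lose some throughput to communication overhead, which is exactly what the interconnect is meant to minimize.

```python
# Back-of-envelope: roughly how many H100s is a full SuperPoD "worth" on inference?
# From the text: 8,192 chips per pod, each at ~60% of H100 inference performance.
# ASSUMPTION: scaling_efficiency is a hypothetical knob, not a published number.

CHIPS_PER_POD = 8192
ASCEND_VS_H100 = 0.60


def h100_equivalents(chips: int, relative_perf: float,
                     scaling_efficiency: float = 1.0) -> float:
    """Naive aggregate: chip count x per-chip ratio x cluster efficiency."""
    return chips * relative_perf * scaling_efficiency


if __name__ == "__main__":
    # Try a perfect cluster and two lossier, made-up efficiency levels.
    for eff in (1.0, 0.8, 0.6):
        total = h100_equivalents(CHIPS_PER_POD, ASCEND_VS_H100, eff)
        print(f"efficiency {eff:.0%}: ~{total:,.0f} H100-equivalents")
```

Even with a heavy haircut for overhead, one pod lands in the thousands of H100-equivalents under these assumptions. Treat it as a sanity check on the logic, not a benchmark.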

But Huawei isn't trying to win chip for chip. Instead, it's building a different game:

CANN Next: a software layer compatible with CUDA, so developers can migrate without rewriting from scratch

MindSpore: Huawei's domestic alternative to PyTorch, the industry standard AI development framework

Atlas 950 SuperPoD: the physical cluster that brings the whole stack together at data center scale

Not identical to Nvidia's stack. But close enough that ByteDance wrote a $5.6 billion check…

The arena opens

March 31st, six weeks after the 15th Five Year Plan was approved.

Shenzhen switched on 11,000 petaflops of AI compute, equivalent to 5.5 million personal computers running simultaneously- on 100% domestic hardware. No Western components anywhere in the stack.

92% was booked before launch.

Target: 80,000 petaflops by end of 2026. A global AI computing hub by 2028.
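For anyone who wants to check the math, here's a quick sketch using only the figures quoted in this issue (11,000 petaflops for the new phase, 14,000 combined, 5.5 million PC-equivalents, 80,000 target). The variable names are mine, and the per-PC number is simply what the comparison implies, not an official spec.

```python
# Sanity-check the Shenzhen cluster figures quoted in this issue.
# All inputs come from the text; the derived values are simple arithmetic.

PHASE_2_PFLOPS = 11_000       # newly activated capacity
COMBINED_PFLOPS = 14_000      # Phase 1 + Phase 2, per the Scout Report
TARGET_2026_PFLOPS = 80_000   # stated end-of-2026 target
PC_EQUIVALENTS = 5_500_000    # "5.5 million personal computers"

# Phase 1 capacity implied by the combined total.
phase_1_pflops = COMBINED_PFLOPS - PHASE_2_PFLOPS

# 1 petaflop = 1,000 teraflops, so this is the per-PC figure the comparison implies.
implied_tflops_per_pc = PHASE_2_PFLOPS * 1_000 / PC_EQUIVALENTS

# How much the combined cluster must grow to hit the 2026 target.
growth_needed = TARGET_2026_PFLOPS / COMBINED_PFLOPS

print(f"Implied Phase 1 capacity: {phase_1_pflops:,} petaflops")
print(f"Implied compute per PC: {implied_tflops_per_pc:.1f} teraflops")
print(f"Growth needed to reach target: {growth_needed:.1f}x")
```

In other words, the target implies growing today's capacity nearly sixfold by the end of 2026.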

Huawei didn't unveil the Atlas 950 in Shenzhen. They took it to MWC Barcelona- positioning it as a global product for any government or enterprise that wants AI infrastructure without US dependencies.

The FYP is live

The 15th FYP measured success not by chip production but by how deeply AI computing penetrates the economy.

The Shenzhen cluster is that penetration- in the city I live in, on domestic hardware, six weeks after the plan was approved.

All four layers, all moving simultaneously:

Chip: Huawei's Ascend roadmap, 750,000 units shipping in 2026

Cloud: Shenzhen's cluster live and oversubscribed, Alibaba opening a new data center on domestic chips

Application: ByteDance's $5.6B order, Chinese hyperscalers redirecting their entire infrastructure spend

Model: DeepSeek V4, the most anticipated AI release of 2026, confirmed to run on Huawei silicon

The plan described an architecture, and Shenzhen is that architecture, built and running.

Our read

The Council on Foreign Relations (CFR) estimates that by 2027, leading US AI chips could be 17x more powerful than Huawei's best. The software ecosystem gap is real and structural, and it likely won’t close for a while.

But-

Huawei doesn't need to match Nvidia's peak performance to reshape the market. It needs to be good enough for the governments, enterprises, and hyperscalers that want sovereign AI infrastructure and/or can’t access Western supply chains.

ByteDance didn't commit $5.6 billion out of pity. DeepSeek didn't choose Huawei silicon for V4 out of compromise- it chose it because the stack is ready to roll.

Export controls were designed to contain China's AI ambitions at the hardware layer. What they actually did was force the construction of a parallel infrastructure ecosystem with its own chips, software, clusters, and customers.

The containment strategy created exactly what it was designed to prevent.

STAT OF THE WEEK

$5.6 billion

ByteDance's committed Huawei Ascend chip spend in 2026 alone. For context: Nvidia's entire China revenue in 2024 was roughly $3-4 billion.

See you next week,

Jen, live from Shenzhen