Welcome to the Halftime Show.

Caught up with an ex-colleague on Monday, a SWE at Brex. Asked him what people in SF were saying about the model wave. His answer: "what model wave"

Good. This shit keeps me going. It’s why I breathe.

Let's lock in.

PLAY OF THE WEEK

Keeping up IS the sport

Six launches, almost all frontier-level, in roughly three weeks from five separate Chinese labs:

Kimi K2.5 (Moonshot AI) on January 27

GLM-5 (Zhipu AI) and MiniMax M2.5 (MiniMax) on February 11

Seedance 2.0 (ByteDance) on February 12

Doubao 2.0 (ByteDance) on February 14

Qwen 3.5 (Alibaba) on February 16

Covering every layer of the stack: video generation, coding, agentic workflows, reasoning, multimodal enterprise.

The iteration speed is truly cracked. It tells you where the talent, the infrastructure, and the competitive pressure actually live right now.

The crazier thing is, each model is good enough to headline its own news cycle. So what does it mean to compete in a market that moves this fast?

SCOUT REPORT

→ Five frontier models. Fourteen days. Every layer of the stack. Good benchmark breakdown on each from Interconnects (Hugging Face / Interconnects.ai)

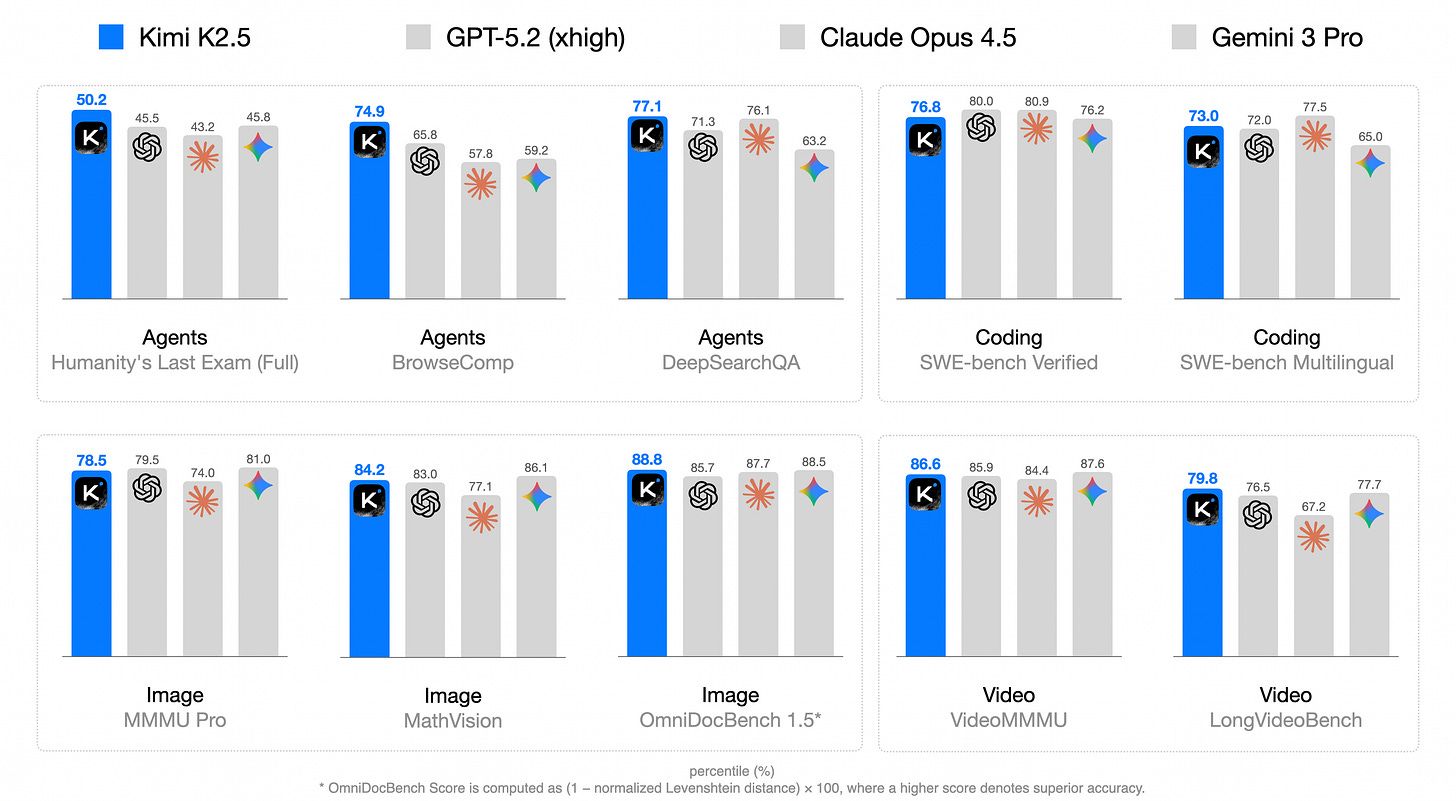

→ Kimi K2.5 outperforms GPT-5.2 on the Humanity's Last Exam benchmark. An open-weight model. 50.2% with tools versus GPT-5.2's 45.5%. Released January 27 by Moonshot AI. 1 trillion total parameters, 32 billion active per token. Priced at $0.60/$3.00 per million input/output tokens, roughly one-fifth of Claude Opus 4.6. (Hugging Face / Maxime Labonne)

→ Qwen 3.5 edges out Claude Opus 4.6 on LiveCodeBench. 85.33% versus 84.68%. An open-weight model beating the leading closed frontier model on a real-world coding benchmark. Available for free download under Apache 2.0. (Maniac AI)

→ Seedance 2.0 went globally viral and spooked Hollywood in the same week. Tom Cruise fighting kung fu masters, generated in minutes. The Motion Picture Association issued a formal copyright infringement statement. Elon Musk publicly praised it on X. Sora still isn't widely available. (CNN)

→ Doubao 2.0 matches GPT-5.2 and Gemini 3 Pro. At roughly one tenth the inference cost. ByteDance released it on Valentine's Day, timed deliberately ahead of Lunar New Year, after being caught off guard by DeepSeek's viral moment during the same holiday last year. For enterprises running agentic workflows at scale, the cost reduction changes the unit economics entirely. (Reuters via Taipei Times)
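
What do those per-token prices look like on an actual bill? A minimal Python sketch, assuming the Kimi K2.5 figures above are input/output prices per million tokens. The workload (one million agent calls at 2,000 tokens in and 500 tokens out) is made up purely for illustration, and `workload_cost_usd` is my own helper name, not anyone's API.

```python
def workload_cost_usd(
    input_tokens: int,
    output_tokens: int,
    in_price_per_m: float,
    out_price_per_m: float,
) -> float:
    """Total cost in USD, given prices quoted per million tokens."""
    input_cost = input_tokens / 1_000_000 * in_price_per_m
    output_cost = output_tokens / 1_000_000 * out_price_per_m
    return input_cost + output_cost


# Hypothetical workload: 1M calls, 2,000 tokens in and 500 tokens out per call.
CALLS = 1_000_000
kimi_cost = workload_cost_usd(CALLS * 2_000, CALLS * 500, 0.60, 3.00)

# Assumption: "roughly one-fifth" applies evenly to input and output prices,
# so the comparable closed-model bill is about 5x. Not a quoted price.
implied_closed_cost = kimi_cost * 5

print(f"Kimi K2.5:            ${kimi_cost:,.0f}")
print(f"Implied closed model: ${implied_closed_cost:,.0f}")
```

Swap in your own token counts; the ratio holds, but the absolute gap is what procurement will actually notice.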

FILM ROOM

The winner isn't always the fastest at the start

I’ve been following F1 long enough to know that the car that wins in March rarely wins in November.

The regulations stay the same. The physics don't change. What changes is the development rate - how fast each team iterates between races, extracts performance from the same rulebook, and compounds small advantages across a full season.

The team that wins isn't always the team that starts fastest. It's the team that improves fastest.

That's the right frame for what happened in the three weeks ending February 16.

What makes it significant is what happened between the versions - in three months or less:

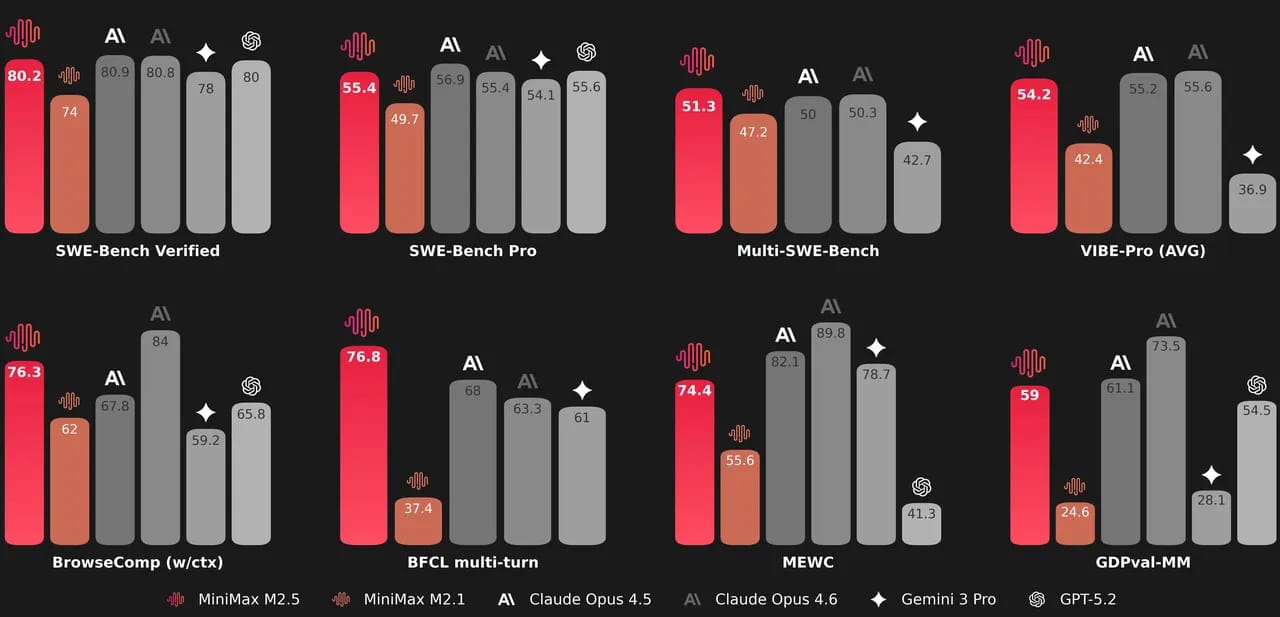

MiniMax M2.1 → M2.5: Task completion speed on SWE Bench up 37%. A coding task that took 33 minutes now takes 22.8 minutes. That's a wall-clock improvement

GLM-4.5 → GLM-5: Training data scaled from 23 trillion to 28.5 trillion tokens. Entirely new reinforcement learning infrastructure built from scratch. Hallucination score improved 35 points on AA Omniscience

Kimi K2 → K2.5: 15 trillion additional multimodal tokens added on top of the existing checkpoint. Native vision capability added to a model that previously only handled text. Priced 85% below Claude Opus 4.6 on input tokens

via Interconnects
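
One note on reading the MiniMax wall-clock numbers: "percent faster" and "percent less time" are two different stats. A minimal Python sketch, assuming the 33 and 22.8 minute figures above are average per-task times. The function names are mine, purely illustrative.

```python
def time_saved_pct(before_min: float, after_min: float) -> float:
    """Percent reduction in wall-clock time per task."""
    return (before_min - after_min) / before_min * 100


def speedup_pct(before_min: float, after_min: float) -> float:
    """Percent increase in throughput (tasks completed per unit time)."""
    return (before_min / after_min - 1) * 100


before, after = 33.0, 22.8  # minutes per task, as quoted above

print(f"time saved: {time_saved_pct(before, after):.1f}%")
print(f"speedup:    {speedup_pct(before, after):.1f}%")
```

On these two figures, the task takes about 31% less time, which is the same thing as roughly 45% more tasks per hour. Neither lands exactly on the 37% headline, which may be measured against a slightly different baseline, so check which definition a vendor is using before comparing speed claims.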

What does this speed mean for anyone building on top of these models?

In F1, championship teams don't win by designing the best car once. They win by bringing the most meaningful upgrades across the most races.

Compounding small improvements > a single breakthrough that plateaus.

MiniMax shipped M2, M2.1, and M2.5 in three and a half months. Their improvement rate on SWE Bench over that period outpaced Claude, GPT, and Gemini. The model you evaluate in Feb may look meaningfully different by May - and the team shipping fastest controls what "meaningfully different" means.

Silicon Valley is not standing still either. Claude Opus 4.6 still leads on most composite benchmarks (we love Claude). GPT-5.2 still holds the frontier on several reasoning evaluations. The US labs have more compute, and years of alignment research that doesn't show up in a benchmark table.

But the strategic problem that no benchmark score solves?

OpenAI and Anthropic are built around deliberate pacing - flagship releases, product cycles, controlled rollouts. That's a rational strategy when you're the market leader protecting margin and trust.

The problem: it assumes the competitive threat arrives on a similar cadence.

Increasingly, that’s less true.

When six models ship in three weeks from five separate companies, the enterprise evaluation cycle collapses. Procurement teams can't assess, pilot, and commit before the landscape shifts again.

The default response to evaluation paralysis = stay with what you know. For most global enterprises right now, that means OpenAI or Anthropic. Short term, that's an advantage for the incumbents.

But the longer this cadence holds, the more it erodes the assumption that the best model is always a Silicon Valley one.

And the evidence that assumption is already cracking?

Chinese open source models went from 1.2% of global usage in late 2024 to nearly 30% in 2025, measured across 100 trillion tokens.

Airbnb's AI customer service agent - used by 50% of US customers, cutting resolution time from three hours to six seconds - runs heavily on Alibaba's Qwen. CEO Brian Chesky's verdict: "very good, fast and cheap." Chamath Palihapitiya moved his company's workflows from Amazon Bedrock to Moonshot's Kimi K2. Mira Murati's Thinking Machines Lab ($12B valuation) released a tool supporting eight Qwen models.

By July 2025, China had overtaken the US in cumulative open model downloads, according to a16z.

Once that assumption breaks - once an enterprise in Southeast Asia, the Middle East, or Latin America defaults to a model that's 85% as capable at 15% of the cost - the switching cost compounds in the other direction.
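
To put a number on that trade, here is a back-of-the-envelope Python sketch. It assumes "capability" can be squeezed into a single relative score (a big simplification) and treats the 85% and 15% figures above as illustrative, not measured. `value_ratio` is my own name for the helper.

```python
def value_ratio(relative_capability: float, relative_cost: float) -> float:
    """Capability per dollar, relative to a baseline model scored 1.0 / 1.0."""
    return relative_capability / relative_cost


# Illustrative figures from the paragraph above: 85% as capable, 15% of the cost.
ratio = value_ratio(0.85, 0.15)
print(f"capability per dollar vs. baseline: {ratio:.1f}x")
```

Under those assumptions the cheaper model delivers well over five times the capability per dollar, which is the kind of gap that turns a pilot into a default.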

That's the race we’re watching. Not the benchmark table but the assumption underneath.

In F1, they call it the development race. The constructor who wins it doesn't always have the fastest car at the start of the season.

They have the fastest rate of change.

Or around here, we call it delta 🙂

STAT OF THE WEEK

20

The number of days across which six major model releases landed, covering video generation, coding, agentic reasoning, multimodal enterprise, and open-weight infrastructure. Each priced significantly below SF counterparts.

See you next week,

Jen, live from Shenzhen